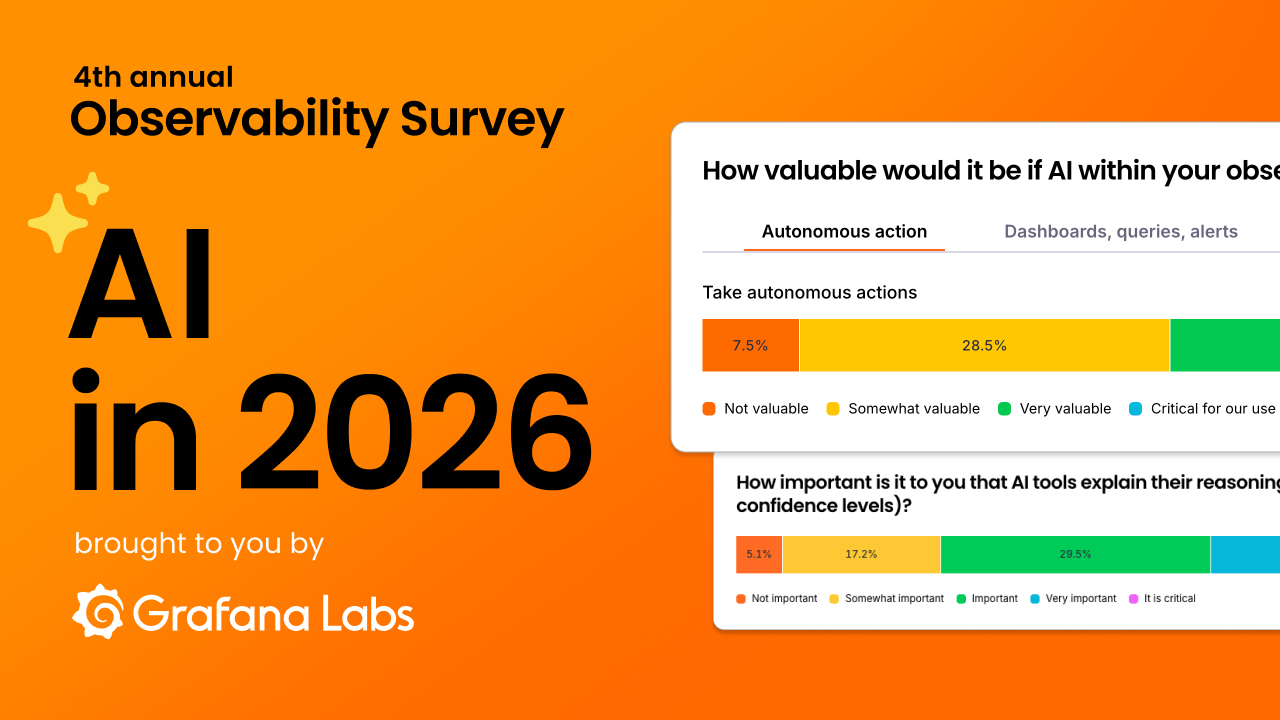

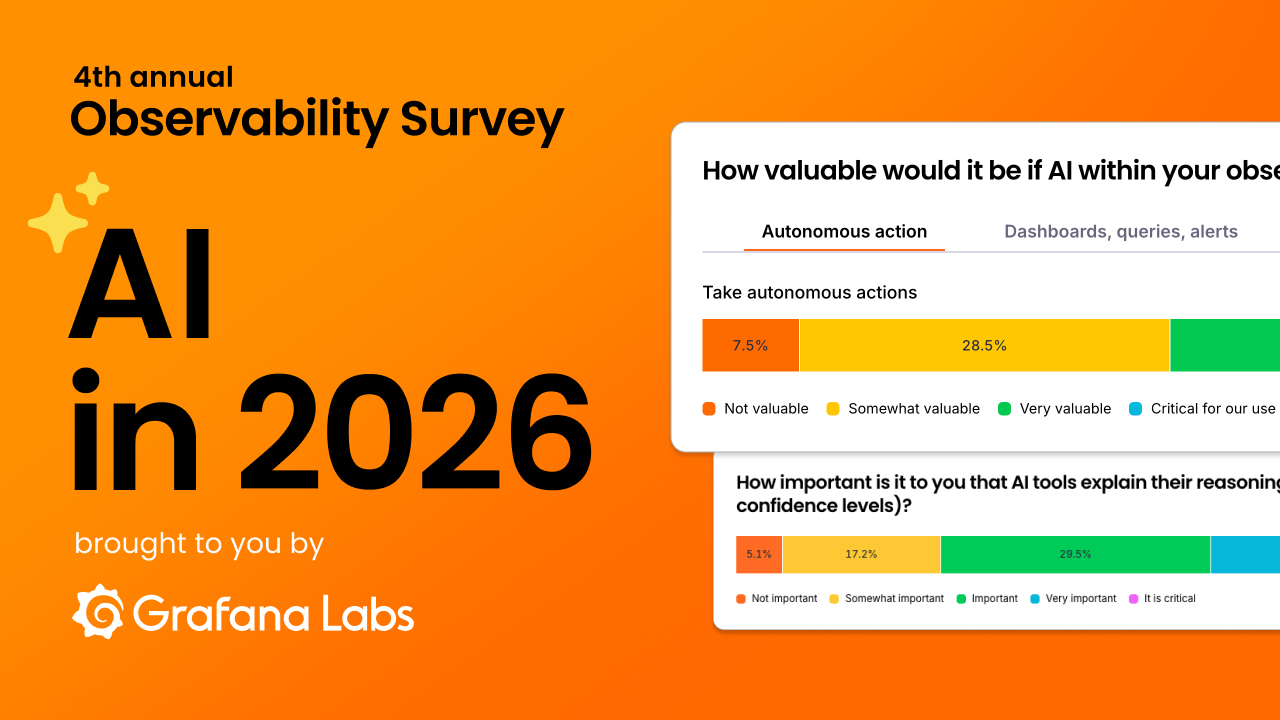

AI in observability in 2026: Huge potential, lingering concerns

Summary

This article originally appeared on the Grafana Labs blog.

Dec 12, 2025 • 50 views
